Model Collapse Isn’t an AI Problem—It’s a Human One

Why blaming AI alone for systemic failures misses the real issue: deliberate institutional opacity.

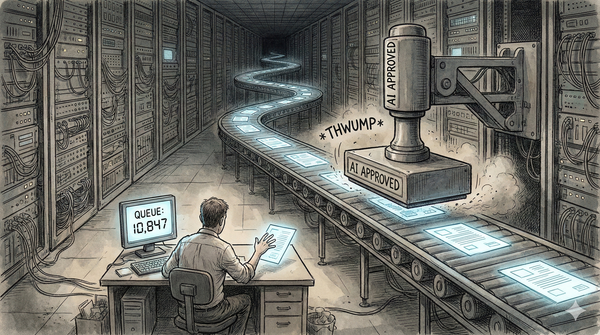

Decisions affecting your finances, your health, and your rights are increasingly made by AI systems whose logic is hidden behind layers of complexity. When these systems fail or discriminate, institutions quickly blame the algorithm and wash their hands of responsibility. But the truth runs deeper: what we call "model collapse," the degradation of AI models that are trained repeatedly on their own flawed outputs, isn’t merely technical. It’s rooted in human choices to maintain deliberate opacity and plausible deniability.

In our newest analysis, we uncover why institutions prefer black-box AI, the human strategies behind algorithmic secrecy, and the devastating consequences this approach has for society.

Institutional Opacity as a Strategic Choice

Organizations have long hidden behind expert consultants to deflect blame. Now, AI has amplified that same tactic:

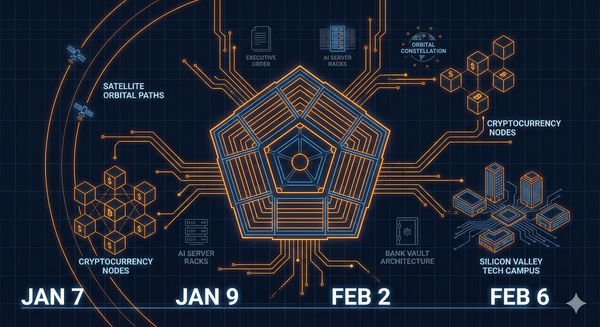

- FAA & SpaceX: Employees questioning AI-driven safety shortcuts face intimidation—justified by opaque predictive analytics.

- DOGE Credit Card Incident: 100,000 federal employee accounts closed overnight based on undisclosed AI criteria, leaving users without recourse.

- Educational Gatekeeping: When marginalized groups rapidly adopt AI tools, institutions respond by labeling that use as misconduct rather than empowerment.

These aren’t isolated mistakes—they’re deliberate outcomes of a strategy to weaponize AI’s inherent complexity.

Model Collapse: The Hidden Consequence

What institutions overlook is how quickly AI systems degrade when trained repeatedly on their own flawed outputs:

- Algorithms produce increasingly biased, less accurate results over time.

- Institutional secrecy prevents early detection, ensuring errors compound silently.

- Public trust erodes as failures emerge only after catastrophic outcomes occur.

Opacity transforms technical failures into human tragedies.
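To make the mechanism concrete, here is a toy sketch in Python. It is not how any real institution's system works: it assumes the simplest possible "model," a bell curve fitted to ten data points, and the function name `simulate_collapse` and all of its parameters are invented for illustration. Each generation is trained only on samples produced by the generation before it, with no fresh real-world data.

```python
import random
import statistics

def simulate_collapse(generations=300, sample_size=10, seed=0):
    """Refit a Gaussian to its own samples, generation after generation.

    Hypothetical toy setup: every name and default here is an assumption
    made for illustration, not a description of any production system.
    """
    rng = random.Random(seed)
    mean, std = 0.0, 1.0  # generation 0 stands in for the real-world data
    history = [std]
    for _ in range(generations):
        # The current model generates the next generation's training set.
        samples = [rng.gauss(mean, std) for _ in range(sample_size)]
        # "Retrain": fit a new Gaussian to purely synthetic data.
        mean = statistics.fmean(samples)
        std = statistics.pstdev(samples)  # population (maximum-likelihood) estimate
        history.append(std)
    return history

history = simulate_collapse()
for gen in (0, 10, 50, 100, 300):
    print(f"generation {gen:3d}: spread = {history[gen]:.6g}")
```

In this setup the spread of the fitted curve tends to shrink from one generation to the next, so the model gradually forgets the variety in the original data. Someone who only ever sees the latest generation's outputs has nothing to compare them against, which is why outside access to earlier data and earlier models matters for catching the drift early.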

Dig Deeper: The FakeSoap Podcast

Explore the layers beneath AI’s algorithmic opacity crisis in our latest episode. We discuss real-world cases, institutional incentives, and actionable steps to restore transparency and accountability.

Solutions: Transparency, Auditing, and Education

There's a clear path to accountability and trust:

- Independent Algorithmic Audits: External reviews of high-stakes AI.

- Explainability Requirements: Clear justifications for decisions affecting human lives.

- Public AI Literacy: Equipping people with the knowledge to question, challenge, and understand algorithmic decisions.
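As a rough sketch of what one small piece of an independent audit might compute, the Python below compares approval rates across groups in a decision log. The log is hypothetical data invented for illustration, and the helper names `approval_rates` and `disparity_ratio` are assumptions, not part of any standard auditing tool.

```python
from collections import defaultdict

def approval_rates(decisions):
    """Return the approval rate per group from (group, approved) records."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {group: approved[group] / totals[group] for group in totals}

def disparity_ratio(rates):
    """Lowest group rate divided by the highest; 1.0 means equal rates.

    Assumes at least one group has a nonzero approval rate.
    """
    return min(rates.values()) / max(rates.values())

# Hypothetical decision log, invented purely for illustration.
log = (
    [("A", True)] * 80 + [("A", False)] * 20
    + [("B", True)] * 50 + [("B", False)] * 50
)

rates = approval_rates(log)
print(rates)
print(f"disparity ratio: {disparity_ratio(rates):.3f}")
```

A check like this only works if auditors can see the decision log in the first place. How large a gap counts as a problem is a policy judgment, not something the code can settle.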

It’s time institutions choose transparency over strategic ambiguity.

Final Thought: Reclaim Human Responsibility

Model collapse is not inevitable. It’s a symptom of deliberate choices—choices we can reverse by demanding openness and accountability now. Let’s act before the algorithmic collapse becomes societal collapse.

Join the conversation. Demand transparency. Share this insight.